To achieve peak operational agility, today’s enterprises are pivoting toward modularity. Organizations are moving beyond rigid job titles to embrace skills-first talent models while replacing solitary chatbots with collaborative multi-agent AI systems. By integrating specialized human expertise with domain-specific AI frameworks, leaders can slash management overhead, bridge niche skill gaps, and build a more resilient, high-velocity digital ecosystem.

In the US employment market, formal job titles are becoming secondary to specialized, validated skill sets, such as the combination of “Python Microservices” and “AI Ethics.”

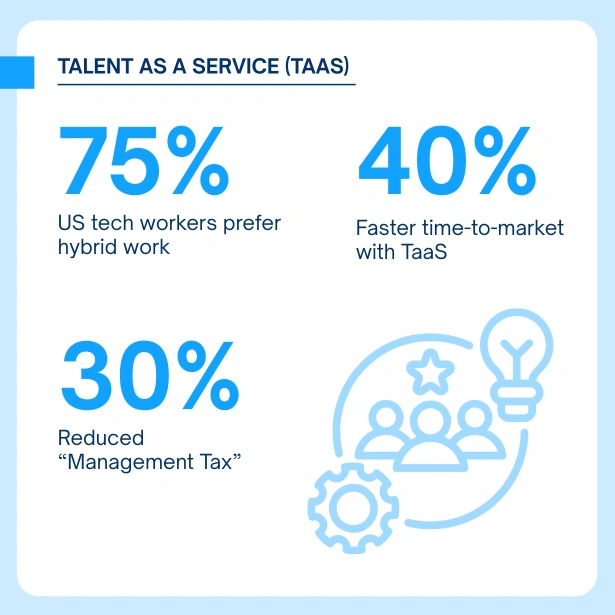

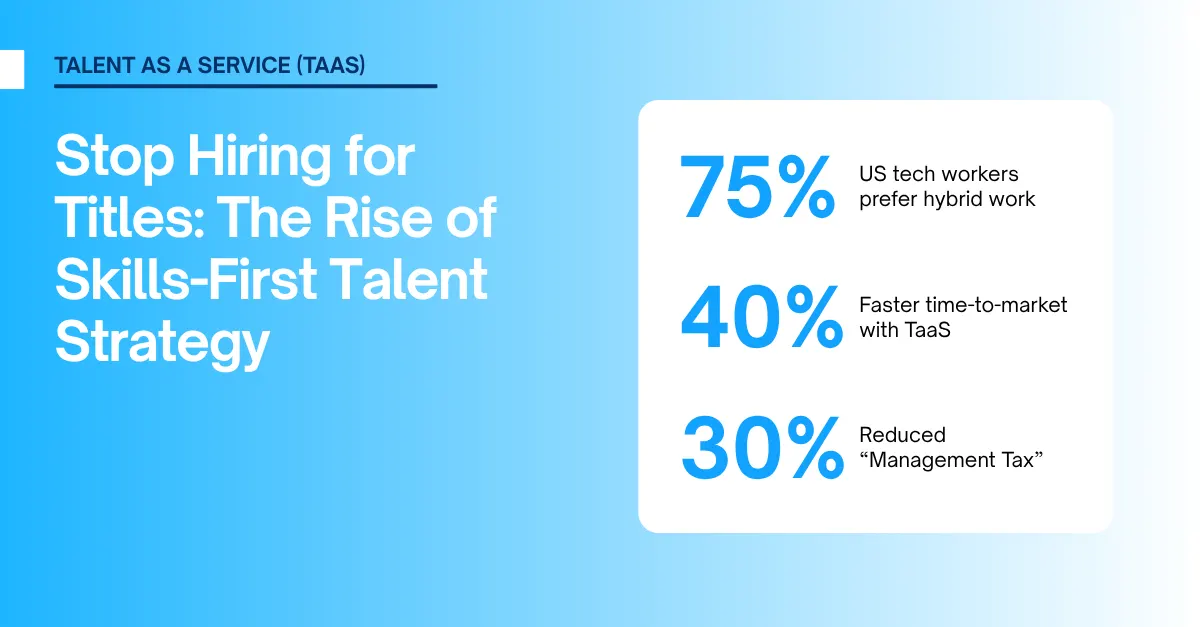

The focus is now on scaling hybrid teams effectively. Instead of traditional staff augmentation, businesses are adopting a “Managed Team” model, in which the Talent-as-a-Service (TaaS) provider handles the operational and cultural management of offshore or nearshore teams. This shift reduces the “management tax” on US-based tech leaders by up to 30%.

Data shows that 75% of US tech workers now prioritize hybrid work arrangements. Furthermore, businesses that leverage TaaS to bridge the “Niche Skill Gap” report a 40% faster time-to-market for new feature releases.

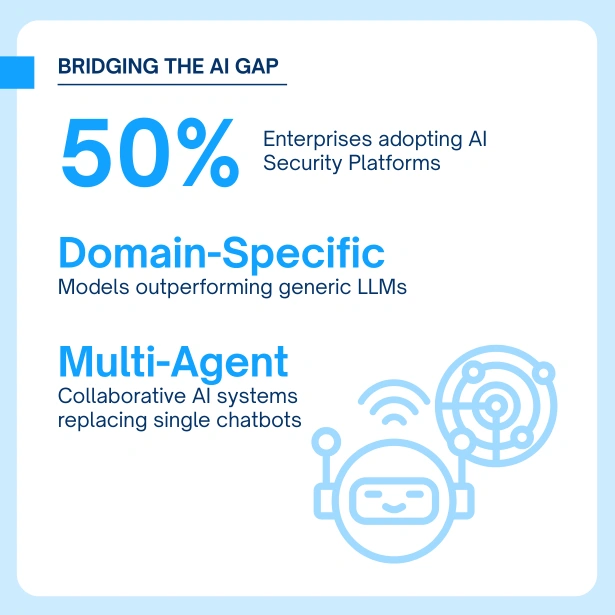

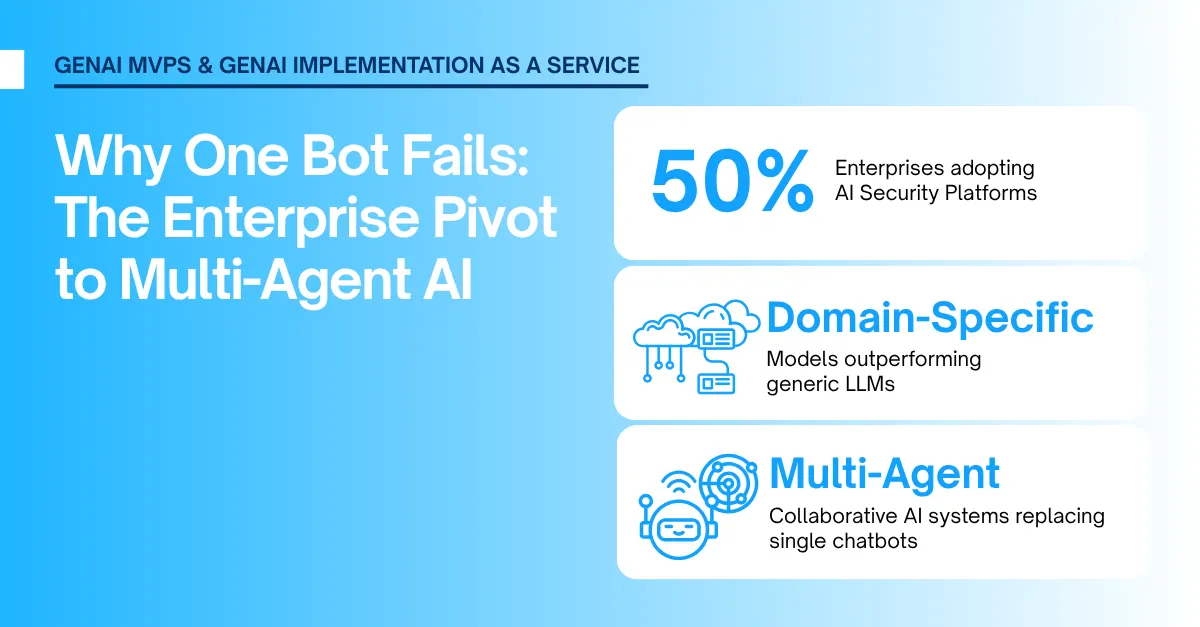

The era of the “single chatbot” is ending in 2026. Businesses are now implementing Multi-Agent Systems, where specialized AI agents collaborate to achieve complex, multi-step business objectives rather than simply answering prompts.

The focus has shifted toward DSLMs (Domain-Specific Language Models). To increase accuracy and reduce hallucination rates, general-purpose models are being replaced by models trained on industry-specific data, such as legal, medical, or engineering material. This grounds the AI’s output in professional reality rather than in general internet data.

Security is the new priority for AI scaling. According to Gartner, by late 2026 more than 50% of enterprises are expected to adopt dedicated AI security platforms to protect their generative AI investments from threats such as “prompt injection” and “rogue agent” attacks. This projection signals a major shift in how organizations perceive AI risk: a move from traditional cybersecurity controls toward AI-specific protection mechanisms, which is critical for maintaining “Algorithmic Accountability” in a multi-agent environment.

The growing reliance on AI agents, copilots, and autonomous workflows has introduced new threat vectors, including prompt injection, data exfiltration, model manipulation, and rogue-agent behavior. Unlike conventional attacks, these risks exploit how AI systems interpret instructions and interact with external data sources. As enterprises embed GenAI deeper into business-critical processes, AI security platforms are emerging as a foundational layer to ensure trust, compliance, and operational safety, much as endpoint and cloud security evolved in earlier technology cycles.