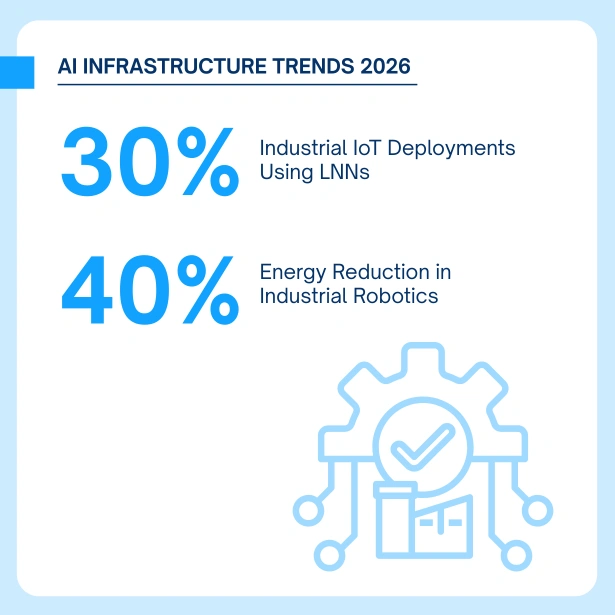

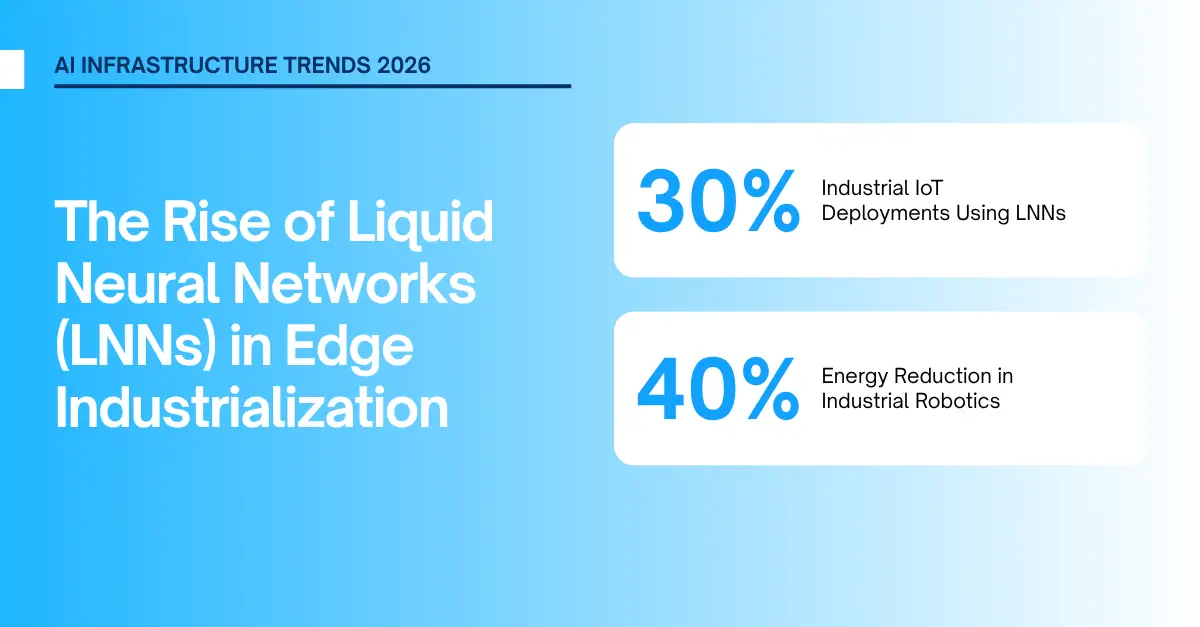

The Rise of Liquid Neural Networks (LNNs) in Edge Industrialization

The most profound impact of this shift is no longer theoretical: it is reaching the factory floor in autonomous logistics and precision manufacturing. According to Accenture’s 2026 Industry X Report, liquid architectures have already cut energy consumption in industrial robotics by 40%. In the energy sector, these models act as the “brain” behind smart grids, allowing them to self-optimize against volatile demand patterns with sub-millisecond latency. This marks a move away from “brute-force” AI and toward context-aware systems that do more than solve problems: they prioritize environmental sustainability and operational ROI in the world’s most mission-critical environments.

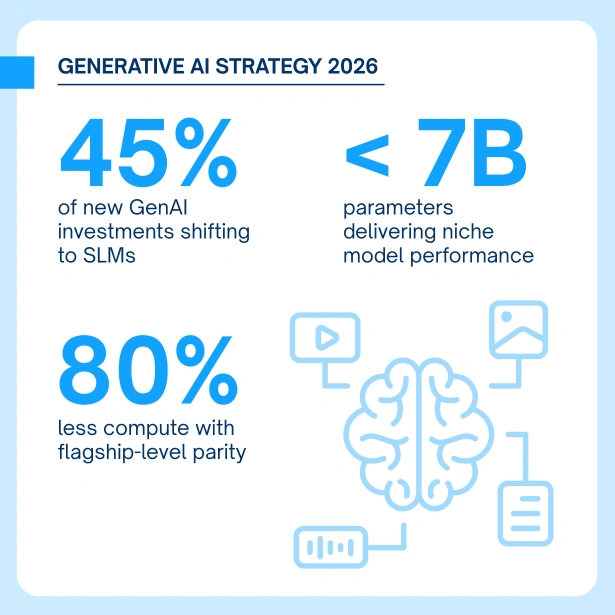

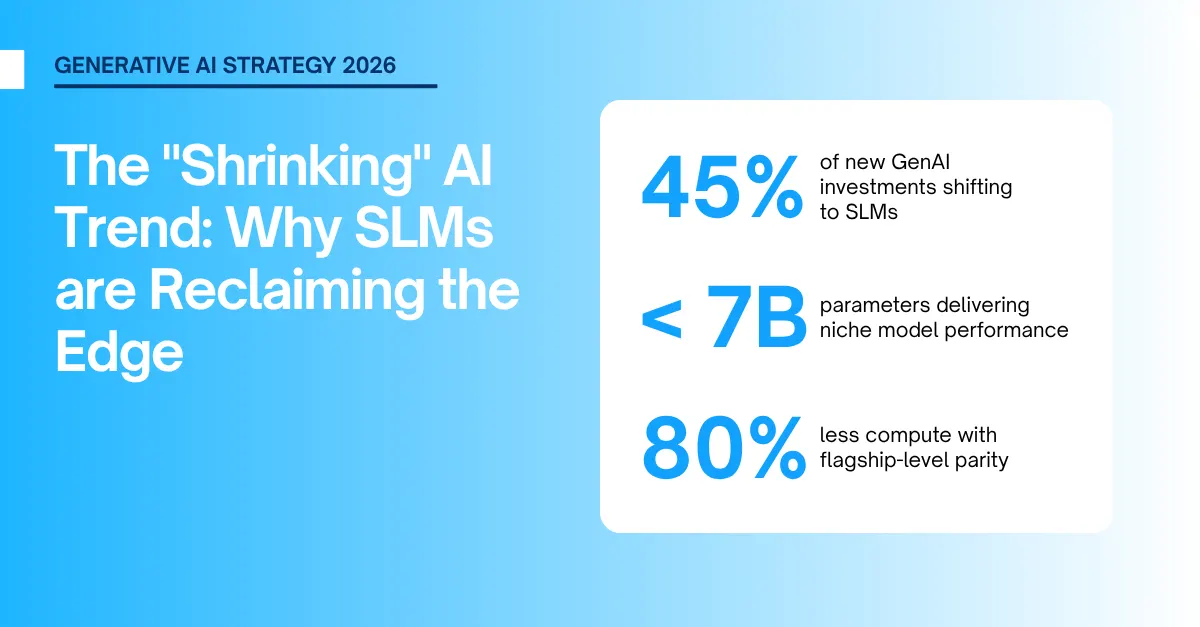

The “Shrinking” AI Trend: Why SLMs are Reclaiming the Edge

The AI landscape is undergoing a major budgetary pivot. According to the IDC 2026 Global AI Spending Guide, 45% of new GenAI investments are now flowing toward Small Language Models (SLMs) rather than sprawling frontier LLMs. These highly compressed, task-specific models, typically under 7 billion parameters, are no longer just “lite” versions; in specialized domains they are now outperforming generalist giants. Stanford HAI’s 2026 Index reports that SLMs have reached performance parity with 2024-era flagship models while requiring 80% less computational overhead. For the modern enterprise, this is about more than saving on cloud costs: it is about decentralizing intelligence and enabling high-performance, on-device AI.
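To make the on-device point concrete, here is a rough back-of-the-envelope sketch of how much memory the weights of a 7-billion-parameter model need at different numeric precisions. The helper name `weight_memory_gb` is hypothetical, and the estimate covers weights only (no activations, KV cache, or runtime overhead), so treat it as a lower bound rather than a sizing guide.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


# Compare common precisions for a 7-billion-parameter model.
for bits in (16, 8, 4):
    print(f"7B parameters at {bits}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

At 16-bit precision the weights alone take about 14 GB; quantizing to 4 bits brings that down to roughly 3.5 GB, which is the range where laptops and edge devices become plausible targets.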

Today’s CIOs are using SLMs to tackle the twin headaches of data privacy and rising inference costs. By shifting deployments on-premises or to private VPCs, firms reduce their exposure to the security risks inherent in sending proprietary data to third-party APIs. This is more than a technical tweak; it is a strategic pivot toward “Privacy-by-Design” AI, an architecture that lets departments run specialized, localized AI agents with full control over data residency. The result is a more predictable cost structure and a lower Total Cost of Ownership (TCO): in 2026, the most secure AI is the one you own.
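The cost argument can be sketched as a simple break-even calculation: a usage-priced API bill grows with token volume, while a self-hosted SLM is closer to a flat monthly cost. The function names and the dollar figures below are hypothetical placeholders chosen for illustration, not numbers from the reports cited above.

```python
def api_monthly_cost(tokens_per_month: float, price_per_million_tokens: float) -> float:
    """Usage-based bill: scales linearly with monthly token volume."""
    return tokens_per_month / 1e6 * price_per_million_tokens


def break_even_tokens(fixed_monthly_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume at which a flat self-hosting cost equals the API bill."""
    return fixed_monthly_cost / price_per_million_tokens * 1e6


# Hypothetical inputs: $2,000/month for self-hosting, $4 per million API tokens.
SELF_HOSTED_MONTHLY = 2000.0
API_PRICE_PER_MILLION = 4.0

threshold = break_even_tokens(SELF_HOSTED_MONTHLY, API_PRICE_PER_MILLION)
print(f"Break-even volume: {threshold / 1e6:.0f} million tokens per month")
```

Under these assumed inputs, self-hosting becomes cheaper above about 500 million tokens per month. A real TCO comparison would also need to account for staffing, hardware depreciation, and utilization.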

A Space for Thoughtful
